OpenAI’s language models GPT-2 and GPT-3 have revolutionized natural language processing (NLP) through their Transformer-based architecture. When GPT-2 was first released in 2019 in OpenAI’s paper Language Models are Unsupervised Multitask Learners [1], it was groundbreaking, leading to extensions by Nvidia (Megatron-LM, 2019) and by Microsoft (Turing-NLG, 2020). Then in 2020, the GPT-3 model was released in OpenAI’s paper Language Models are Few-Shot Learners [2]. With GPT-3, language models seemed, for the first time, capable of understanding natural language and of responding with results that people could not tell apart from the responses of real humans. From a ‘data mining’ perspective, these deep learning models seemingly extract and comprehend information with a level of semantic complexity and accuracy that goes beyond prediction, beyond discovery, to (almost) understanding. After releasing GPT-2, and before completing GPT-3, OpenAI switched from being a non-profit to a for-profit corporation. Microsoft was impressed enough to invest a billion dollars in OpenAI after GPT-2 was released, and it later secured an exclusive license to use GPT-3 commercially. In this review, I will summarize the two key papers by OpenAI that have motivated much of the recent R&D around notional.ai by East Agile.

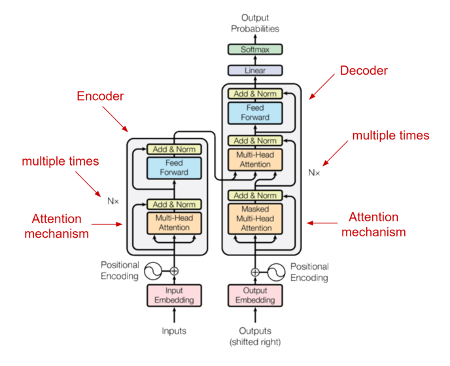

In Language Models are Unsupervised Multitask Learners, OpenAI extends its earlier model, GPT, to GPT-2. They used effectively the same underlying technology, Transformers [3], which outperform LSTM and ConvNet models on language modeling. Through attention, a Transformer can look back directly at any earlier position in its context window to make sense of a language statement, without having to squeeze everything it has read into a single fixed-size state. LSTMs also allow look back, but for practical purposes only over a limited window.
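To make the look-back idea concrete, here is a minimal sketch of the scaled dot-product attention from [3], with the causal mask that GPT-style models use so that each token only sees itself and earlier tokens. It is an illustration under simplifying assumptions, not OpenAI's implementation: the function name `causal_self_attention` is hypothetical, there is a single head, and the learned query, key, and value projections of a real Transformer are omitted.

```python
import math

def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of float vectors, one vector per token.
    Simplification: a real Transformer first applies learned projections.
    """
    d_k = len(keys[0])
    dim = len(values[0])
    outputs = []
    for t, q in enumerate(queries):
        # Token t may attend only to positions 0..t (itself and earlier tokens).
        scores = [
            sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(d_k)
            for s in range(t + 1)
        ]
        # Numerically stable softmax over the visible positions.
        peak = max(scores)
        exps = [math.exp(x - peak) for x in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # The output is a weighted average of the visible value vectors.
        outputs.append([
            sum(w * values[s][j] for s, w in enumerate(weights))
            for j in range(dim)
        ])
    return outputs

# Three toy "token" vectors, used as queries, keys, and values alike.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = causal_self_attention(tokens, tokens, tokens)
```

The first token can only attend to itself, so its output equals its own value vector. Later tokens blend in information from every earlier position, however far back it sits within the window.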

But the story of GPT-2 is really one of data: a very large dataset was used. It consisted of about 40 GB of text, 8 million documents, all culled from web pages linked in Reddit posts with top ratings (3 karma or above). This enabled models to be produced with parameter counts ranging from 117 million parameters (12 layers) to an unprecedented (at the time) 1.5 billion parameters (48 layers). The key insight was that the model continued to get more and more accurate as it became bigger, reaching state of the art. This might seem unsurprising, but other language models, such as BERT, start to become less accurate at a certain point in data size.

Another key innovation in the model is that the structure of its response can be tuned by prompting the model with a few examples. Automatically, without any tuning, without any parameter retraining, the model understands the new context and produces results. In practice, GPT-2 requires many examples, even hundreds of examples, to be properly prompted. But it can function with just a few examples, a single example, or even just a question. In the last case, these are described as zero-shot results. The adaptability of the model earns it the description Unsupervised Multitask Learner. Key to this new approach is the assertion that, given sufficient data, deep learning language models can become generalized in their capabilities. Up until about this point, it was understood that models needed to be trained on specific bodies of text for specific types of capabilities.
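As a rough illustration of what prompting with examples means in practice, the sketch below assembles a zero-shot and a few-shot prompt as plain text. The `build_prompt` helper and the `Q:`/`A:` layout are assumptions made for this example, not a format prescribed by either paper. The model itself is not called here; it would simply be asked to continue the text after the final `A:`.

```python
def build_prompt(task, examples, query):
    """Assemble a text prompt from a task description, worked examples, and a query.

    Hypothetical helper: with an empty `examples` list the prompt is zero-shot,
    with one example it is one-shot, and with several it is few-shot.
    """
    lines = [task, ""]
    for question, answer in examples:
        lines.append(f"Q: {question}")
        lines.append(f"A: {answer}")
        lines.append("")
    # The prompt ends with an unanswered question for the model to complete.
    lines.append(f"Q: {query}")
    lines.append("A:")
    return "\n".join(lines)

task = "Translate English to French."

# Zero-shot: only the task description and the question.
zero_shot = build_prompt(task, [], "cheese")

# Few-shot: the same question, preceded by two worked examples.
few_shot = build_prompt(
    task,
    [("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")],
    "cheese",
)
```

Nothing about the model changes between the two cases. The only difference is how much context precedes the final question.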

In Language Models are Few-shot Learners, OpenAI goes all out in producing GPT-3.

They expand the input data from just Reddit-derived data to include two collections of books, all of English Wikipedia, and a massive web crawl. The web crawl, a filtered version of the public Common Crawl dataset, makes up fully 60% of the new training mix. In their GPT-2 paper they described Common Crawl as having “significant data quality issues” and being “mostly unintelligible.” But they seem to have overcome this for GPT-3 (perhaps the billion dollars in funding from Microsoft helped).
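One way to picture a training mix like this is as weighted sampling over data sources. The sketch below is only an illustration: the 60% figure for Common Crawl comes from the text above, the remaining weights are the rounded percentages reported in the GPT-3 paper's dataset table (they sum to 101 because of rounding), and `sample_sources` is a hypothetical helper rather than anything OpenAI has published.

```python
import random
from collections import Counter

# Rounded training-mix weights (percent) as reported in the GPT-3 paper.
# They add up to 101 because of rounding; random.choices normalizes them.
MIXTURE = {
    "Common Crawl": 60,
    "WebText2": 22,
    "Books1": 8,
    "Books2": 8,
    "Wikipedia": 3,
}

def sample_sources(n, seed=0):
    """Draw n source names in proportion to their mixture weights."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    names = list(MIXTURE)
    weights = list(MIXTURE.values())
    return rng.choices(names, weights=weights, k=n)

# Count how often each source is picked over many draws.
counts = Counter(sample_sources(10_000))
common_crawl_share = counts["Common Crawl"] / 10_000
```

Over many draws, the share of samples taken from Common Crawl settles near its weight in the mix, regardless of how large each raw source is on disk.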

All this extra data allows GPT-3 to expand to a breathtaking 175 billion parameters. The GPT-3 model uses the same attention-based architecture as GPT-2. But more data allows the use of more layers. The full GPT-3 model uses 96 attention layers, each with 96 attention heads of dimension 128. GPT-3 expands on the GPT-2 model size by roughly two orders of magnitude (from 1.5 billion to 175 billion parameters) by using the same architecture, but adding more layers, and wider layers.
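The parameter counts can be sanity-checked with a common back-of-the-envelope rule: each Transformer layer holds roughly 12 × d_model² weights (about 4 × d_model² for the attention projections and 8 × d_model² for the feed-forward block), plus the embedding tables. The sketch below applies that rule. Treat it as an approximation under stated assumptions: `approx_params` is a hypothetical helper, biases and layer norms are ignored, and the vocabulary size (50,257), the context lengths (1,024 and 2,048), and the GPT-2 width (1,600) are taken from the two papers rather than from the text above.

```python
def approx_params(n_layers, d_model, vocab_size=50257, n_positions=2048):
    """Rough parameter count for a decoder-only Transformer.

    Assumes ~12 * d_model^2 weights per layer and ignores biases and
    layer norms, so the result is an estimate, not an exact count.
    """
    blocks = 12 * n_layers * d_model ** 2
    embeddings = (vocab_size + n_positions) * d_model
    return blocks + embeddings

# GPT-3: 96 layers, 96 heads of dimension 128, so d_model = 96 * 128.
gpt3 = approx_params(n_layers=96, d_model=96 * 128)

# Largest GPT-2: 48 layers, d_model = 1600, context length 1024.
gpt2 = approx_params(n_layers=48, d_model=1600, n_positions=1024)

# Ratio between the two estimates.
ratio = gpt3 / gpt2

print(f"GPT-3 estimate: {gpt3 / 1e9:.1f}B parameters")
print(f"GPT-2 estimate: {gpt2 / 1e9:.2f}B parameters")
print(f"Ratio: {ratio:.0f}x")
```

Both estimates land close to the published figures of 175 billion and 1.5 billion parameters, which suggests the growth comes almost entirely from stacking more and wider layers of the same kind.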

The resulting model comes much closer to the zero-shot and few-shot capability hinted at by GPT-2. Just ask a question and get an answer. Or provide one or a few examples of what a prompt (question) and answer look like, and let the model continue. No retraining and no re-tuning are needed.

Figure 1 shows the general Transformer architecture (from Attention Is All You Need by Vaswani et al. [3]; OpenAI does not publish a diagram).

The papers provide some rather nice examples of the quality of output produced by their respective models. But secondary sources show more impressive results, from generating solutions to programming problems just from a problem statement, to creating spreadsheets, complete with data, based just on a description, to question and answer exchanges in complete English. Some of the more exciting examples are entire sections of papers (like this one) written entirely by GPT-3 with just the title as a prompt. It will not take more than a few years before businesses can query a language model and download a unique, fully researched and written paper a few seconds later. That is the vision for notional.ai.

References

[1] A. Radford, J. Wu, R. Child, D. Luan, D. Amodei, and I. Sutskever, “Language Models are Unsupervised Multitask Learners,” OpenAI, 2019.

[2] T. B. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, et al., “Language Models are Few-Shot Learners,” OpenAI, Jun. 2020.

[3] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin, “Attention Is All You Need,” in Advances in Neural Information Processing Systems, pp. 6000–6010, 2017.